Table of Contents

- I. The Technical Problem: Engineering Realistic Physical Logic

- II. The Core Pillars of the Seedance 2.0 Architecture

- III. Technical Guide: The 5-Step API Integration Workflow

- IV. Competitive Deep Dive: Automation Efficiency Gap

- V. FAQ and Industry Positioning

- Summary: A Video API First Approach

On April 22, 2026, Arena (formerly LMArena), a widely cited AI benchmark platform, released its video generation leaderboards. Seedance 2.0 took the #1 ranking in all three categories (Text-to-Video, Image-to-Video, and Video Editing), establishing itself as the new state of the art (SOTA).

A decisive sweep. No other model tied for first.

ByteDance has been a consistent innovator in AI, but securing the top spot across every evaluated track is a landmark result that forces the industry to recalibrate its expectations. This report analyzes the technical differentiators behind Seedance 2.0’s lead and provides a guide to integrating it into your software stack via API.

I. The Technical Problem: Engineering Realistic Physical Logic

For developers and creators using AI video, several “immersion killers” defined the previous generation of models:

- Physically Unnatural Motion: Subjects sliding or floating rather than walking with realistic gravity.

- Inconsistent Coherence: Significant stylistic drifting between consecutive scenes or frames.

- Subject Deformation: Faces or objects morphing unexpectedly during generation.

- Audio-Visual Disconnect: Desynchronized sound effects and mismatched lip-sync.

The bottleneck has been architectural: most models generate pixels frame by frame rather than simulating a physical world. Seedance 2.0 represents a shift from generating image sequences to generating a physical simulation.

II. The Core Pillars of the Seedance 2.0 Architecture

1. Unified Multimodal Input Architecture

Unlike pipelines that rely solely on text prompts, Seedance 2.0 uses a unified architecture that processes four input modalities simultaneously:

- Text (Semantic Prompts)

- Images (Identity/Composition References)

- Video Clips (Motion continuity materials)

- Audio (Mood and sound effect tracks)

By ingesting these four inputs in parallel, the model can keep the subject’s appearance (image) consistent with the desired motion path (video), while the emotional tone (audio) aligns with the lighting described in the prompt (text). This is closer to how human directors operate.
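As a rough illustration of how the four modalities might travel together in one request, here is a minimal sketch. The class name and the `images`, `video`, and `audio` field names are assumptions made for illustration; only `prompt` appears in the request template later in this article, so check the official API reference for the real field names.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class MultimodalRequest:
    """Hypothetical container for the four input modalities described above."""

    prompt: str                                            # text: semantic prompt
    image_refs: list[str] = field(default_factory=list)    # identity/composition references
    video_ref: Optional[str] = None                        # motion continuity clip
    audio_ref: Optional[str] = None                        # mood / sound-effect track

    def to_input(self) -> dict:
        """Build an `input` object, omitting modalities that were not supplied."""
        if not self.prompt.strip():
            raise ValueError("a text prompt is required")
        body: dict = {"prompt": self.prompt}
        if self.image_refs:
            body["images"] = list(self.image_refs)   # assumed field name
        if self.video_ref:
            body["video"] = self.video_ref           # assumed field name
        if self.audio_ref:
            body["audio"] = self.audio_ref           # assumed field name
        return body


request = MultimodalRequest(
    prompt="A chef plating dessert in a sunlit kitchen, warm tones",
    image_refs=["https://example.com/chef-face.png"],
    audio_ref="https://example.com/kitchen-ambience.wav",
)
print(request.to_input())
```

Keeping all references in one object makes it easy to see which modalities a given job actually uses before it is submitted.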

2. Native, Generative A/V Sync

Traditional video workflows treat audio and visual generation as separate processes that must be aligned after the fact. Seedance 2.0 generates audio and video simultaneously, so the two are synchronized from the start.

The output contains accurately aligned lip-sync, footsteps, and collision sound effects, with the audio track locked to the video frame-by-frame, removing the need for secondary synchronization processing.
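To make "locked frame-by-frame" concrete from the consumer side, the sketch below maps each video frame to the audio samples that play during it. It does not describe the model's internals. The 48 kHz sample rate is an assumption (the article does not state one); the 24 fps and 10-second values come from the request template in this article.

```python
SAMPLE_RATE_HZ = 48_000  # assumed audio sample rate; not stated in the article
FPS = 24                 # frame rate used in the article's request template
DURATION_S = 10          # longest clip duration listed in the article


def frame_audio_window(
    frame_index: int,
    sample_rate_hz: int = SAMPLE_RATE_HZ,
    fps: int = FPS,
) -> tuple[int, int]:
    """Return the half-open [start, end) audio sample range for one video frame."""
    if frame_index < 0:
        raise ValueError("frame_index must be non-negative")
    # Integer arithmetic avoids drift when the rate is not a multiple of fps.
    start = frame_index * sample_rate_hz // fps
    end = (frame_index + 1) * sample_rate_hz // fps
    return start, end


total_frames = FPS * DURATION_S
print(total_frames)                          # 240
print(frame_audio_window(0))                 # (0, 2000)
print(frame_audio_window(total_frames - 1))  # (478000, 480000)
```

A helper like this can be used to spot-check a rendered file: a footstep visible on frame `n` should fall inside the sample window returned for `n`.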

3. Parameterized Camera Control

Seedance 2.0 incorporates cinematic camera control directly into its API. Each movement is a controllable parameter:

- Dolly In/Out: Controls the speed and depth of forward/backward camera motion for emotional emphasis.

- Pan/Tilt: Manages the horizontal and vertical rotation of the viewpoint.

- Tracking: Follows a specific subject with precise velocity control.
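The camera parameters above could be modeled as a small validated object before they are placed in a request. This is a sketch under assumptions: the `type`, `speed`, and `stability` keys and the values `dolly_in`, `slow`, and `high` come from the request template in this article, while every other allowed value listed here is a guess at naming and should be checked against the official documentation.

```python
from dataclasses import asdict, dataclass

# Only "dolly_in", "slow", and "high" appear in the article; the rest are assumed names.
CAMERA_TYPES = {"dolly_in", "dolly_out", "pan", "tilt", "tracking"}
SPEEDS = {"slow", "medium", "fast"}
STABILITY_LEVELS = {"low", "medium", "high"}


@dataclass(frozen=True)
class CameraMove:
    """Hypothetical wrapper for the `camera` object in a generation request."""

    type: str
    speed: str = "slow"
    stability: str = "high"

    def __post_init__(self) -> None:
        # Fail early on typos rather than sending an invalid request.
        if self.type not in CAMERA_TYPES:
            raise ValueError(f"unknown camera type: {self.type!r}")
        if self.speed not in SPEEDS:
            raise ValueError(f"unknown speed: {self.speed!r}")
        if self.stability not in STABILITY_LEVELS:
            raise ValueError(f"unknown stability: {self.stability!r}")

    def to_dict(self) -> dict:
        """Return the plain dict form suitable for JSON serialization."""
        return asdict(self)


print(CameraMove("dolly_in").to_dict())
```

Validating locally catches a misspelled movement name before a generation job is paid for.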

III. Technical Guide: The 5-Step API Integration Workflow

Integrating Seedance 2.0 (via Volcengine/Ark) into your existing application pipeline follows a streamlined, 5-step process.

1. Define Type: Select the desired generation mode (Text-to-Video, Image-to-Video, or Video Continuity).

2. Asset Preparation: Gather necessary reference materials (e.g., character images, specific audio mood tracks).

3. Prompt Engineering: Structure the textual input following the format: Subject + Scene + Camera Motion + Style.

4. API Execution: Call the generation endpoint with your parameters.

5. Composite & Export: Finalize the render of the frame-locked A/V file.
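The prompt format from the Prompt Engineering step (Subject + Scene + Camera Motion + Style) can be enforced with a small helper. This is an illustrative sketch; the function name `build_prompt` and the comma-separated output style are assumptions, not part of any official SDK.

```python
def build_prompt(subject: str, scene: str, camera_motion: str, style: str) -> str:
    """Join the four prompt components in the order Subject, Scene, Camera Motion, Style."""
    parts = [subject, scene, camera_motion, style]
    # Trim whitespace and trailing punctuation so the pieces join cleanly.
    cleaned = [part.strip().rstrip(".,") for part in parts]
    if not all(cleaned):
        raise ValueError("all four prompt components are required")
    return ", ".join(cleaned) + "."


prompt = build_prompt(
    subject="A ceramic coffee mug",
    scene="on a wooden desk in morning light",
    camera_motion="slow dolly in",
    style="clean product photography look",
)
print(prompt)
```

Building prompts from named fields keeps batches consistent, since each variation changes one component rather than a free-form sentence.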

Python API Call Template (Text-to-Video)

```python
import requests

# Replace with your own credentials
API_KEY = "your-volcengine-api-key"

# Endpoint for the Ark Beijing region (Volcengine/Ark)
URL = "https://ark.cn-beijing.volces.com/api/v3/seedance/video/generate"

# Core payload definition
payload = {
    "model": "seedance-2.0-pro",  # Options: pro (quality) or flash (speed)
    "input": {
        "prompt": "A professional wide-angle shot of a technology conference lobby at night, dynamic volumetric lighting, people networking in the background, cinematic 2.35:1 aspect ratio, clean modern architecture, f/2.2 shallow depth of field.",
        "negative_prompt": "blurry, low resolution, distorted faces, unnatural camera movement, technical glitch",
        "duration": 10,  # Options: 5 or 10 seconds
        "resolution": "1080p",  # Options: 720p, 1080p, 2K
        "fps": 24,
        "camera": {
            "type": "dolly_in",
            "speed": "slow",
            "stability": "high",
        },
    },
}

headers = {
    "Authorization": f"Bearer {API_KEY}",
    "Content-Type": "application/json",
}

# Submit the generation request; a timeout keeps the call from hanging indefinitely
response = requests.post(URL, json=payload, headers=headers, timeout=30)
response.raise_for_status()  # Raise on HTTP errors instead of printing an error body
print(response.json())
```
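Before sending a request, it can help to check the `input` object against the options listed in the template's comments. The sketch below is a local pre-flight check only: the allowed durations and resolutions are taken from those comments, the function name `validate_input` is hypothetical, and the real service may accept other values.

```python
ALLOWED_DURATIONS = {5, 10}                   # seconds, per the template comments
ALLOWED_RESOLUTIONS = {"720p", "1080p", "2K"}  # per the template comments


def validate_input(params: dict) -> list[str]:
    """Return a list of human-readable problems; an empty list means the input looks fine."""
    errors: list[str] = []
    if not str(params.get("prompt", "")).strip():
        errors.append("prompt is empty")
    if params.get("duration") not in ALLOWED_DURATIONS:
        errors.append(f"duration must be one of {sorted(ALLOWED_DURATIONS)}")
    if params.get("resolution") not in ALLOWED_RESOLUTIONS:
        errors.append(f"resolution must be one of {sorted(ALLOWED_RESOLUTIONS)}")
    fps = params.get("fps")
    if not isinstance(fps, int) or isinstance(fps, bool) or fps <= 0:
        errors.append("fps must be a positive integer")
    return errors


good = {"prompt": "A lobby at night", "duration": 10, "resolution": "1080p", "fps": 24}
bad = {"prompt": "", "duration": 7, "resolution": "4K", "fps": 0}
print(validate_input(good))       # []
print(len(validate_input(bad)))   # 4
```

Collecting every problem in one pass, rather than raising on the first, makes batch logs easier to read.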

IV. Competitive Deep Dive: Automation Efficiency Gap

| Feature | Manual Web UI Generation (Consumer Tool) | API Automated Pipeline |
| --- | --- | --- |
| Workflow | Serial (Login, Upload, Prompt, Generate) | Parallel (Batch Processing, Asynchronous) |
| Scalability | Non-existent | High; capable of thousands of unique variations |
| A/V Sync | Manual Post-Production required | Native; generated pre-synchronized |
| Integration | Standalone | Hooks directly into existing CMS, ERP, or web app |

Real-World Efficiency Test: Creating a batch of 10 customized product marketing videos dropped from 6 hours (manual process) to 20 minutes (automated API processing).
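The parallel workflow in the table can be sketched with a thread pool that submits one payload per product. To keep the example runnable offline, `submit_stub` stands in for the real HTTP call and returns a fake job record; the function names, the SKU labels, and the prompt wording are all illustrative assumptions. The final lines compute the speedup implied by the figures quoted above.

```python
from concurrent.futures import ThreadPoolExecutor


def make_payload(product: str) -> dict:
    """Build one request body per product, reusing the template's field layout."""
    return {
        "model": "seedance-2.0-pro",
        "input": {
            "prompt": f"Studio product shot of {product}, slow dolly in, soft lighting",
            "duration": 5,
            "resolution": "1080p",
            "fps": 24,
        },
    }


def submit_stub(payload: dict) -> dict:
    """Stand-in for the HTTP request; swap in a real call when integrating."""
    return {"status": "queued", "prompt": payload["input"]["prompt"]}


def run_batch(products: list[str], submit=submit_stub, max_workers: int = 4) -> list[dict]:
    """Submit all payloads concurrently; pool.map preserves the input order."""
    payloads = [make_payload(p) for p in products]
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(submit, payloads))


products = [f"SKU-{i:02d}" for i in range(1, 11)]  # 10 videos, as in the test above
jobs = run_batch(products)
print(len(jobs), "jobs queued")

# Speedup implied by the quoted figures: 6 hours manual vs. 20 minutes automated.
speedup = (6 * 60) / 20
print(f"{speedup:.0f}x faster")  # 18x faster
```

Threads suit this workload because each job spends most of its time waiting on the network rather than using the CPU.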

V. FAQ and Industry Positioning

- Q: Is the API ready for commercial deployment?

- A: Yes. Videos generated via the Volcengine API are commercial-ready and watermark-free by default.

- Q: How does Seedance 2.0 position itself against Pika or Runway Gen-2/3?

- A: Currently, Seedance 2.0 demonstrates a clear advantage in motion consistency, native camera parameter control, and audio-visual synchronization, particularly for complex human and interaction scenes.

- Q: How will the market change with Alibaba’s impending “Happy Horse” (ATH) API release on April 27?

- A: The video generation market is rapidly maturing into a duopoly between the two giants (ByteDance vs. Alibaba). While competition is accelerating, Seedance 2.0’s current ecosystem integration (especially with CapCut Pro) and its SOTA benchmark performance provide a robust short-term moat.

Summary: A Video API First Approach

Seedance 2.0’s domination on the Arena leaderboards is not merely a milestone; it is proof that generative video is shifting from a consumer curiosity to a foundational technology stack. It demonstrates that the barrier to professional-grade motion content is no longer a massive production budget—it is an API key.

The API era of generative video has arrived. Whether you are building an e-commerce platform that dynamically generates product videos, or an enterprise marketing suite, Seedance 2.0 provides the technical SOTA required to scale.

Still Having Trouble?

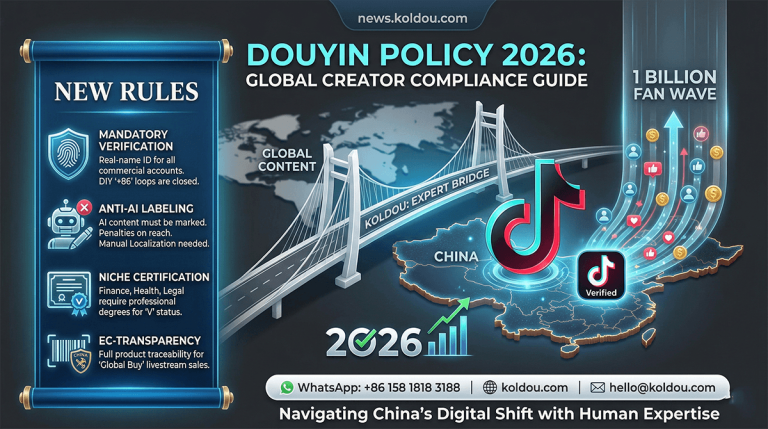

If you're stuck with registration, verification, or accessing Chinese apps like Douyin, you're not alone.

I offer professional, paid support to help you get everything set up quickly and correctly.